The AI Exposure Question Every Provost Should Be Asking Right Now

Most conversations about AI in higher education are happening in the wrong room.

They are happening in IT. In faculty governance. In student affairs. In committees formed specifically to produce a policy.

They are not happening in the room where academic portfolio decisions get made. And that silence has a cost — in relevance, in employer confidence, and in strategic flexibility that disappears quietly before anyone notices.

What AI exposure actually means for your programs

AI exposure is not about whether your students are using AI tools in the classroom. It is about whether the skills and tasks your programs are training students to perform are being automated, augmented, or redefined by AI in the labor market that those students are entering.

A program can have a rigorous AI use policy and still be preparing students for roles that employers are quietly restructuring. The policy question and the portfolio question are not the same question.

The World Economic Forum's Future of Jobs Report 2025 found that employers expect 39% of key job skills to change by 2030. But that average obscures what matters most: which programs, at which institutions, are sitting in the path of disruption, and which are positioned to benefit. An average does not tell a provost where to act.

Students are already sensing the unevenness. The Lumina Foundation-Gallup 2026 State of Higher Education Study found that 42% of bachelor's degree students and 56% of associate degree students say AI has caused them to rethink their field of study, and 16% have already changed their major because of it. Students are drawing their own conclusions about which credentials are durable. The open question is whether their institutions are examining the same issue with better data.

Three questions worth asking about every program

These are not questions for a program review cycle. These are questions for a portfolio conversation that most institutions are not yet having.

Is this program training students for tasks that AI is automating, or skills that AI is augmenting?

Automation and augmentation are not the same thing. The primary impact of generative AI on skills may lie in augmenting human capabilities through human-machine collaboration rather than outright replacement. But the balance between automation and augmentation plays out differently across disciplines. A program built around document review, data entry, or routine analysis looks different today than it did three years ago. A program built around judgment, synthesis, and interpretation is in a different position.

The Tufts Digital Planet American AI Jobs Risk Index estimates that 9.3 million U.S. jobs are at risk of displacement under a median adoption scenario, with approximately $757 billion in household income at stake annually. The risk is not concentrated in manufacturing or physical labor. It falls most heavily on three sectors that many academic programs directly serve: Information (18% job loss risk), Finance and Insurance (17%), and Professional, Scientific, and Technical Services (16%). Your portfolio almost certainly has programs in these categories. The question is which ones, and how exposed they actually are.

What do the employers who hire your graduates say about what they need now?

Not what they said in your last employer survey. What they are saying now, in direct conversations, about the skills they are hiring for, the tasks they are automating, and the gaps they are seeing in recent graduates. The AI Workforce Consortium reported in late 2025 that nearly four out of five information and communications technology roles now require some form of formal AI skills, a pace of change that most curriculum cycles were not designed to match. Labor market data tells you what happened. Employer relationships tell you what is happening.

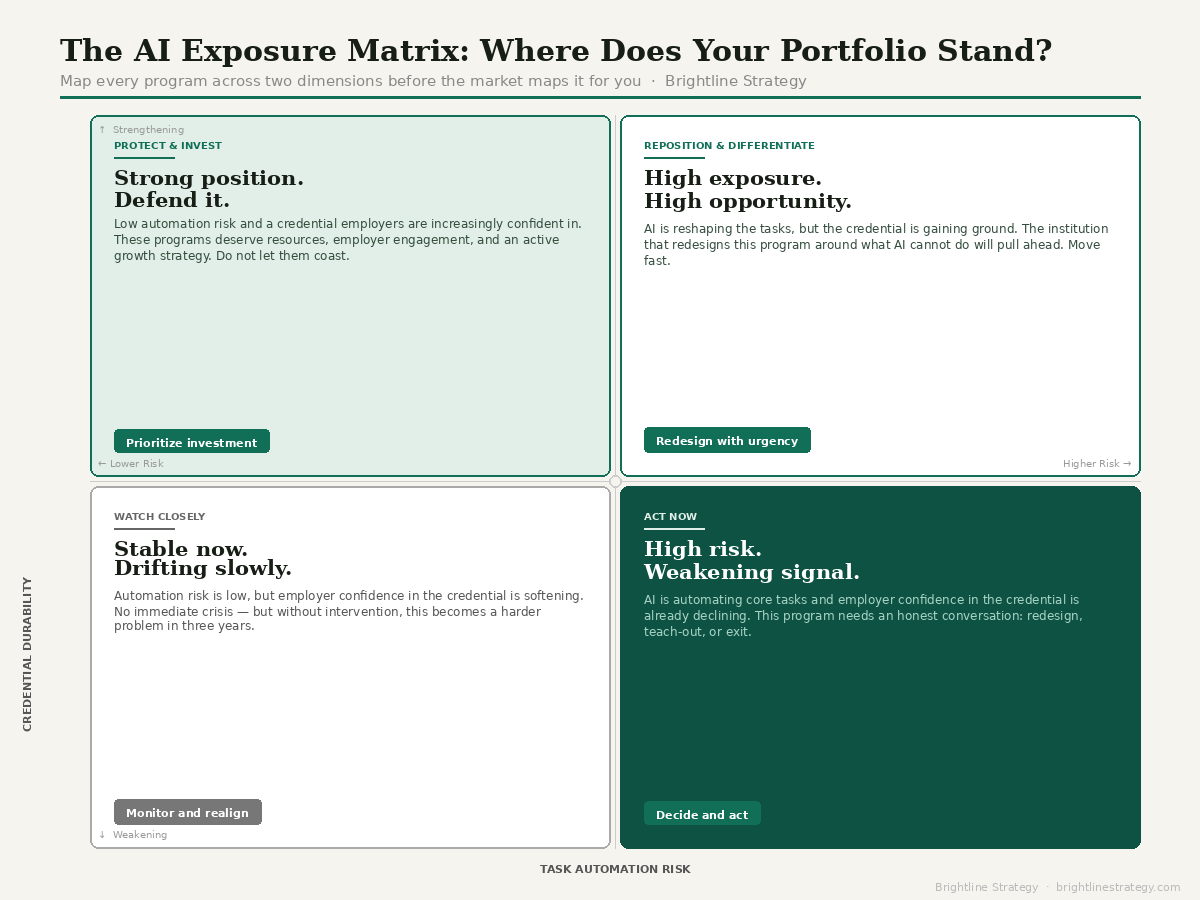

If AI changes the nature of this work over the next three years, will this credential still retain its value?

This is the sharpest version of the question. It moves past enrollment numbers and completion rates and asks what the credential will signal to employers as the field evolves. Some credentials are gaining ground as AI reshapes the workforce. Others are losing employer confidence before the enrollment data reflects it, and by the time it does, the institution is already behind.

Where the question gets stuck

The most common failure is not resistance. It is that the question never gets formally asked. AI exposure tends to be handled at the program level by faculty or department chairs, without anyone reviewing the full portfolio.

Institution-wide AI adoption in higher education surged from 49% in 2024 to 66% in 2025. But adopting AI in operations and administration is a different thing from examining what AI is doing to the labor markets your graduates are entering. Institutions can be deeply engaged with AI internally while their academic portfolio goes unexamined.

The provosts who get ahead of this are not the ones with the most sophisticated AI strategies. They are the ones who have made the question explicit — who owns it, how often it gets asked, and what it would take to answer it honestly.

A question that belongs at the top

Every program in your portfolio sits somewhere on the spectrum from high to low AI exposure. Most institutions do not have a clear picture of where their programs sit. Building that picture is not a technology initiative. It is not a faculty governance process. It is a decision about whether academic leadership is willing to ask a hard question before the market asks it first.

Institutions that make that decision now will have options. Those that wait will be managing consequences.